How Our Engineer Found a Vulnerability in the ChatGPT API — and What It Says About Our Approach to Security Testing

By Joshua Christman, COO & Chief Engineer, Open Security

opensecurity.com

Seeing Vulnerabilities Differently

When you spend your days leading a team of offensive security engineers, you learn to recognize a particular skill in individuals: pattern recognition, the ability to look at new technology and immediately sense how it might break.

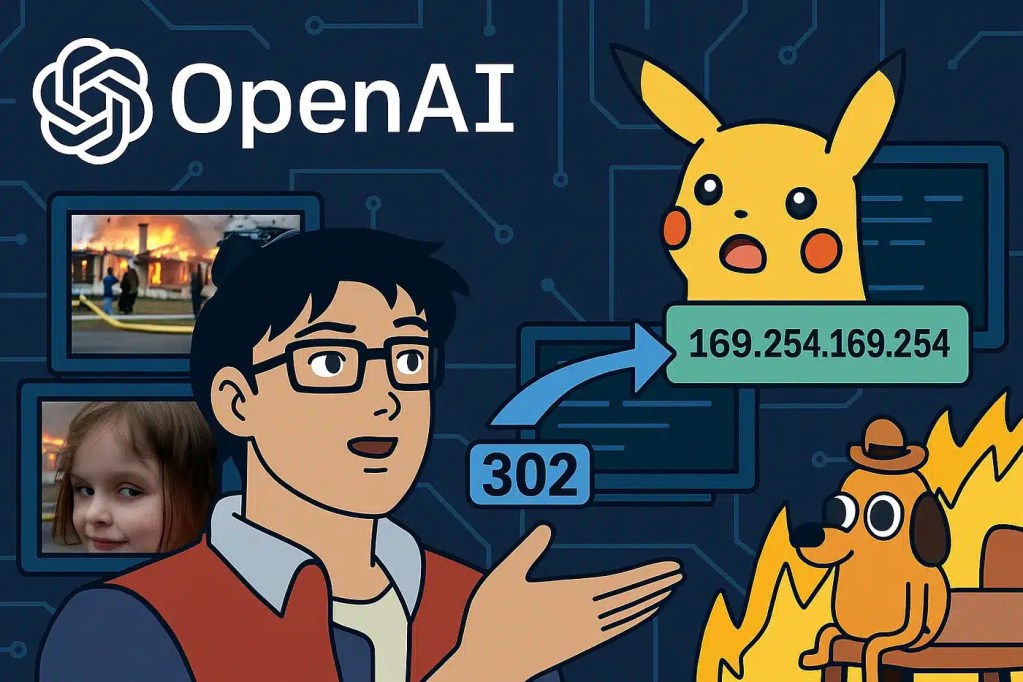

A few weeks ago, one of our engineers, Jacob Krut, uncovered a Server-Side Request Forgery (SSRF) vulnerability in OpenAI’s ChatGPT API. What made this discovery interesting wasn’t luck or brute force; it was instinct, creative reasoning, and disciplined testing.

Jacob’s technical write-up (When GPTs Call Home: Exploiting SSRF in ChatGPT’s Custom Actions) dives into the mechanics of the exploit. But from my perspective as COO and Chief Engineer at Open Security, this finding reflects something deeper about how our team operates: we test systems by thinking like the people who might someday exploit them.

The Discovery: Curiosity Meets Engineering Rigor

The finding started not during a formal bug hunt, but as an exercise in curiosity. Jacob was exploring ChatGPT’s Custom GPT feature, which allows users to define external APIs that their GPTs can call. Where most people see a productivity feature, our engineers see an attack surface, and Jacob was no exception. His testing showed that the feature could be manipulated into sending requests to internal network addresses, the hallmark of an SSRF vulnerability. Through careful experimentation, he confirmed that this behavior could be pivoted to reach Azure’s internal metadata service, a sensitive cloud endpoint that exposes tokens and environment data.

That chain of reasoning (curiosity > hypothesis > controlled proof) exemplifies what we value most: technical depth and creative persistence grounded in methodical process.

Why SSRF Matters

SSRF vulnerabilities are not new, but in modern cloud architectures they’ve evolved into some of the highest-impact, lowest-visibility threats. When a service implicitly trusts its own outbound requests, it can become an unintentional proxy into internal or cloud resources. That’s exactly the kind of issue that can bypass external controls and turn into lateral movement or data exfiltration during a breach.

Our engineers encounter these conditions regularly in enterprise environments and use this same combination of creativity and precision to uncover flaws that traditional scanners or checklist-based testing never detect.
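To make the failure mode in this section concrete, here is a minimal Python sketch of the kind of check a service can apply before fetching a user-supplied URL. It is an illustration only, not OpenAI’s fix and not the method from Jacob’s write-up. The function names (`is_internal_address`, `resolve_host`, `is_url_allowed`) and the HTTPS-only policy are assumptions made for this example.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_internal_address(ip_text: str) -> bool:
    """Return True if a user-supplied URL should never be allowed to reach this address."""
    ip = ipaddress.ip_address(ip_text)
    # Unwrap IPv4-mapped IPv6 addresses (::ffff:a.b.c.d) so they are judged as IPv4.
    if ip.version == 6 and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    return (
        ip.is_private
        or ip.is_loopback
        or ip.is_link_local  # covers 169.254.0.0/16, where cloud metadata services live
        or ip.is_reserved
        or ip.is_multicast
        or ip.is_unspecified
    )


def resolve_host(host: str) -> list[str]:
    """Resolve a hostname to every IP address the system resolver returns."""
    infos = socket.getaddrinfo(host, None)
    return sorted({info[4][0] for info in infos})


def is_url_allowed(url: str, resolver=resolve_host) -> bool:
    """Hypothetical policy: HTTPS only, and every resolved address must be public."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        return False
    try:
        addresses = resolver(parts.hostname)
    except OSError:
        # Resolution failed, so refuse rather than guess.
        return False
    return bool(addresses) and not any(is_internal_address(a) for a in addresses)
```

A check like this is only a starting point. It has to be repeated for every redirect hop, and the request should connect to the same address that was validated. Otherwise a redirect or a second DNS lookup can still steer the request to an internal endpoint.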

What This Says About Our Approach

At Open Security, our penetration testing philosophy is simple: real adversaries don’t follow scripts, and neither should we.

Our engineers are trained and encouraged to:

- Think beyond the “expected” use of a system.

- Identify and chain subtle weaknesses into meaningful impact.

- Treat every test as an exploration, not a formality.

- Turn over every rock looking for bugs.

That mindset is what allows us to deliver results that go far deeper than vulnerability scans or compliance-driven testing. It’s why our clients trust us with their most critical applications, APIs, and cloud infrastructures.

Methodology Over Luck

Findings like this don’t come from chance; they come from methodology.

Every engagement we perform is grounded in structured reconnaissance, hypothesis-driven exploitation, and iterative validation. When our team approaches a new application, we combine the curiosity of researchers with the discipline of engineers. We document each observation, verify each assumption, and push until we understand why something works, not just that it works.

That’s how we’ve uncovered vulnerabilities ranging from cross-cloud privilege escalation chains to multi-tenant data exposure in real-world client environments.

Learn More About Our Testing Services

If your organization builds or deploys complex web or cloud systems, this is the level of rigor you want on your side.

Our Application and API Penetration Testing services, along with our Vulnerability Management Services, use the same techniques and mindset that led to this ChatGPT finding. They are designed to help you identify, understand, and fix vulnerabilities before attackers find them. Let’s talk about your application security needs.

For a deeper look into Jacob’s technical process and the exploit chain itself, read the full article, When GPTs Call Home: Exploiting SSRF in ChatGPT’s Custom Actions.
